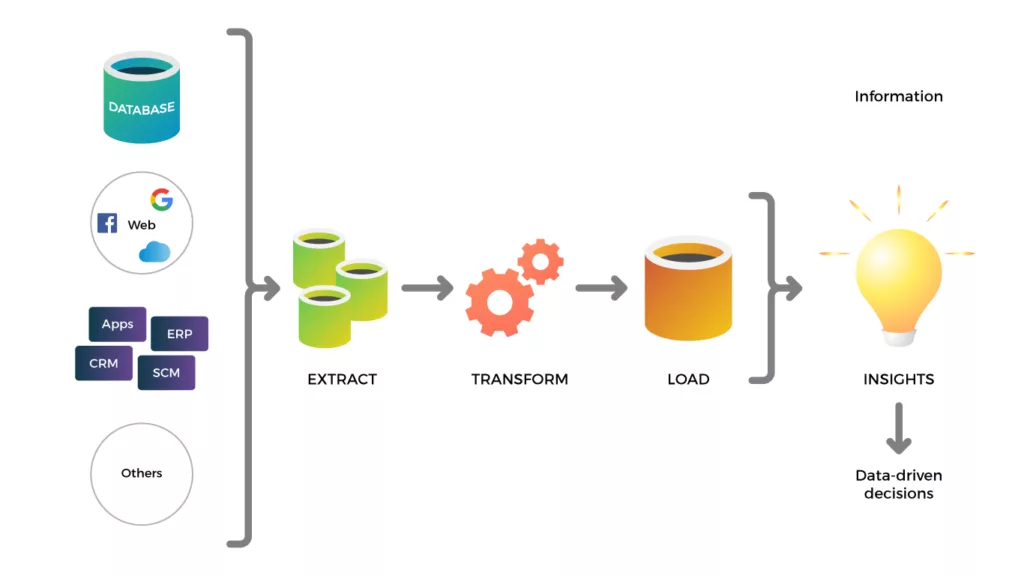

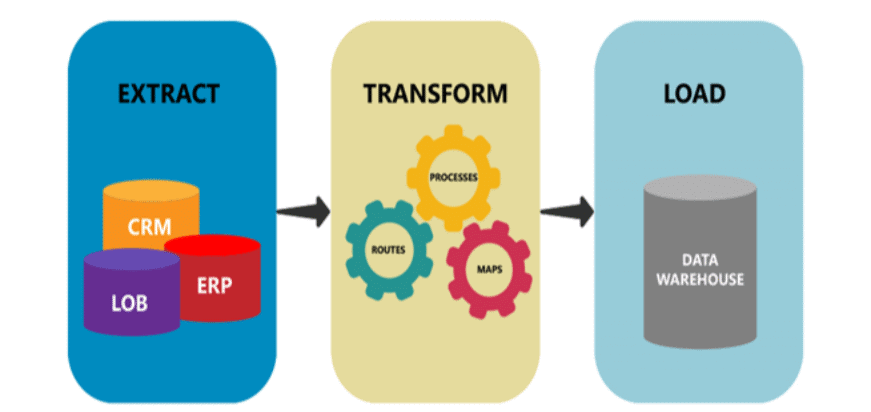

ETL stands for Extract, Transform, and Load. ETL tools are software programs that extract data from sources, transform it into a form suitable for analysis, and then load it into targets such as databases and data warehouses.
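To make the three stages concrete, here is a minimal sketch in Python that uses only the standard library. The sample data, the `orders` table, and the function names are assumptions made up for illustration; a real ETL tool would add scheduling, monitoring, and error handling on top of the same idea.

```python
import csv
import io
import sqlite3

# Hypothetical source data standing in for a flat file.
RAW_CSV = """order_id,customer,amount
1, alice ,19.99
2,BOB,5.00
3,carol,not_a_number
"""

def extract(text):
    """Extract: read rows from a CSV source as dictionaries."""
    return list(csv.DictReader(io.StringIO(text)))

def transform(rows):
    """Transform: normalize names, parse amounts, skip rows that fail to parse."""
    clean = []
    for row in rows:
        try:
            amount = float(row["amount"])
        except ValueError:
            continue  # drop rows whose amount is not numeric
        clean.append((int(row["order_id"]), row["customer"].strip().lower(), amount))
    return clean

def load(rows, conn):
    """Load: write the cleaned rows into a target table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders "
        "(order_id INTEGER PRIMARY KEY, customer TEXT, amount REAL)"
    )
    conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", rows)
    conn.commit()

def run_pipeline(text, conn):
    """Run extract, transform, and load in order, then return the loaded rows."""
    load(transform(extract(text)), conn)
    return conn.execute(
        "SELECT order_id, customer, amount FROM orders ORDER BY order_id"
    ).fetchall()
```

Calling `run_pipeline(RAW_CSV, sqlite3.connect(":memory:"))` loads the two valid orders into an in-memory SQLite database and skips the row with the unparseable amount.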

Data warehousing and business intelligence systems rely on ETL tools to move data from various input points to a central repository, so these tools are used extensively in data exploration and analysis. ETL tools can process many types of data, including structured and unstructured data from tables, flat files, and application logs, as well as data from Web APIs and other sources.

Examples of ETL tools include Talend, Skyvia, and Apache NiFi. Data engineers and data analysts typically use them to bring data from disparate sources into a central repository for analysis and reporting.

NOTE: If you are looking for the best ETL tool for your organization, we recommend our article: List of Best ETL Tools in 2023.

Why you need an ETL tool

First reason. ETL tools give an organization a practical way to move into the world of big data, because they automate and streamline data pipeline processes. The biggest benefit is less time spent on manual work such as hand-coding transformations and mapping data to target systems.
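As an illustration of the mapping work that ETL tools take over, the sketch below describes a source-to-target mapping as data instead of hand-written code. The field names in `FIELD_MAP` and the `map_record` helper are hypothetical and not taken from any particular tool.

```python
# Hypothetical mapping: source field name -> (target column name, type converter).
FIELD_MAP = {
    "cust_nm": ("customer_name", str),
    "ord_amt": ("order_amount", float),
    "ord_dt": ("order_date", str),
}

def map_record(source, field_map=FIELD_MAP):
    """Rename and convert the mapped fields of one source record.

    Source fields that are not listed in the mapping are ignored, and
    mapped fields that are absent from the record are simply left out.
    """
    target = {}
    for src_name, (dst_name, convert) in field_map.items():
        if src_name in source:
            target[dst_name] = convert(source[src_name])
    return target
```

With this approach, supporting a new source field means adding one entry to the mapping rather than writing new transformation code, which is roughly what graphical mapping editors in ETL tools provide.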

Second reason. ETL tools are well suited to complex data management tasks. With the rise of artificial intelligence and machine learning, organizations are mining a greater volume and variety of data than ever before.

Data sources are also more distributed than ever before. Data collected through the Internet of Things can speed up analytics, but big data remains a moving target. With cloud ETL tools, organizations don’t have to struggle to keep up: they can manage their data and data processing needs regardless of the volume or frequency of data.

Finally, data governance requires data stewardship. The General Data Protection Regulation (GDPR), for example, is an EU law designed to protect the privacy of users. Sound data governance processes and standardized data workflows make it easier for organizations to comply with such regulations and requirements.

Data quality means that the data a company or organization holds is reliable, trustworthy, and accurate. Many data quality tools are available today. Enterprise Data Quality (EDQ) is a framework for implementing best practices and processes that improve data quality, while Enterprise Data Governance (EDG) helps establish the supporting tools and practices.
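The kind of rule a data quality step applies can be sketched in a few lines of Python. The `check_quality` function, the required field names, and the very loose email check are assumptions chosen for illustration; they are not how EDQ or any other product works.

```python
def check_quality(records, required=("id", "email")):
    """Return a list of (row_index, problem) tuples for simple quality rules."""
    problems = []
    seen_ids = set()
    for index, record in enumerate(records):
        # Completeness: every required field must be present and non-empty.
        for field in required:
            if record.get(field) in (None, ""):
                problems.append((index, f"missing {field}"))
        # Uniqueness: an id may appear only once across all records.
        record_id = record.get("id")
        if record_id is not None and record_id != "":
            if record_id in seen_ids:
                problems.append((index, f"duplicate id {record_id}"))
            seen_ids.add(record_id)
        # Validity: a deliberately loose format check on the email field.
        email = record.get("email")
        if email and "@" not in email:
            problems.append((index, "malformed email"))
    return problems
```

A pipeline could run a check like this between the transform and load stages and either reject the flagged rows or route them to a review queue.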

ETL tool options

Although ETL tools come in many flavors, not all are built for the modern data environment. Today’s organizations need tools that are flexible and fast enough to adapt to change and that support a wide variety of use cases. The main categories of ETL tools used in data landscapes over the past few years are:

Established or legacy ETL tools:

These tools are still useful for data integration, but they tend to be slower, less flexible, and less robust than modern options. Many of them are code-intensive and lack automation, especially for real-time deployments.

Open Source ETL Tools:

Open-source ETL tools are much more adaptable than their legacy counterparts. They support a range of data formats, although the right choice depends on what data you have, how old it is, and whether a free tool meets your needs. They are typically faster than most legacy tools and, being open source, usually cheaper than proprietary counterparts.

Cloud-based ETL tools:

It is hard to say in general whether it is better to handle data processing yourself or to use a cloud-based ETL tool. For hybrid cloud data sources, however, cloud-based tools are usually the best option.